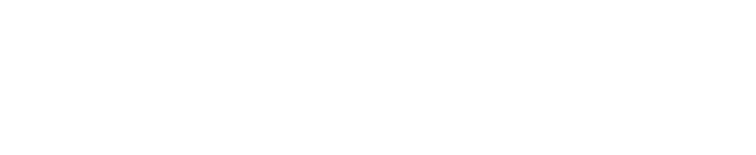

In 3D deep learning, memory consumption grows cubically with spatial resolution. To address this problem, we have developed a data-adaptive framework based on octrees that makes processing much more memory-efficient.

3D deep learning techniques are notoriously memory-hungry because of their high-dimensional input and output spaces. For most applications, however, not all areas of space are equally informative or important. To allow deep learning techniques to scale to spatial resolutions of 256³ and beyond, we have developed the OctNet framework.
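To make the cubic growth concrete, the following minimal sketch computes the size of a single dense voxel grid at several resolutions. The function name `dense_grid_bytes`, the single feature channel, and the 4-byte (float32) values are assumptions chosen for illustration; they are not taken from the OctNet code.

```python
def dense_grid_bytes(resolution, channels=1, bytes_per_value=4):
    """Bytes needed to store one dense cubic voxel grid.

    Assumes `channels` feature channels and `bytes_per_value` bytes per
    entry (4 bytes corresponds to float32). Both are illustrative defaults.
    """
    return resolution ** 3 * channels * bytes_per_value


if __name__ == "__main__":
    for resolution in (32, 64, 128, 256):
        mib = dense_grid_bytes(resolution) / 2 ** 20
        print(f"{resolution}^3 grid: {mib:g} MiB per channel")
```

Each doubling of the resolution multiplies the footprint by eight. A deep network stores many such feature maps with many channels each, so the total grows far beyond the size of a single grid.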

In contrast to existing models, our representation enables 3D convolutional networks that are both deep and high-resolution. The data-adaptive representation using unbalanced octrees allows us to focus memory allocation and computation on the relevant dense regions.
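The idea of spending cells only where the data varies can be sketched with a plain recursive octree over a boolean occupancy grid. This is a generic textbook octree written for illustration, not the OctNet data structure or its API; the function name `count_octree_leaves` and the toy input are assumptions.

```python
import numpy as np


def count_octree_leaves(occ):
    """Count the leaves of an octree built over a cubic boolean grid.

    A cell becomes a leaf when it is uniformly empty, uniformly occupied,
    or a single voxel. Mixed cells are split into eight octants.
    Assumes the grid side length is a power of two.
    """
    n = occ.shape[0]
    if n == 1 or occ.all() or not occ.any():
        return 1
    h = n // 2
    total = 0
    for x in (0, h):
        for y in (0, h):
            for z in (0, h):
                total += count_octree_leaves(occ[x:x + h, y:y + h, z:z + h])
    return total


if __name__ == "__main__":
    # Toy scene: a 64^3 grid that is empty except for one 8^3 block.
    grid = np.zeros((64, 64, 64), dtype=bool)
    grid[:8, :8, :8] = True
    print("dense cells:  ", grid.size)
    print("octree leaves:", count_octree_leaves(grid))
```

In this toy scene the tree is unbalanced: only the octants containing the occupied block are refined, while the large empty regions stay as single coarse leaves.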

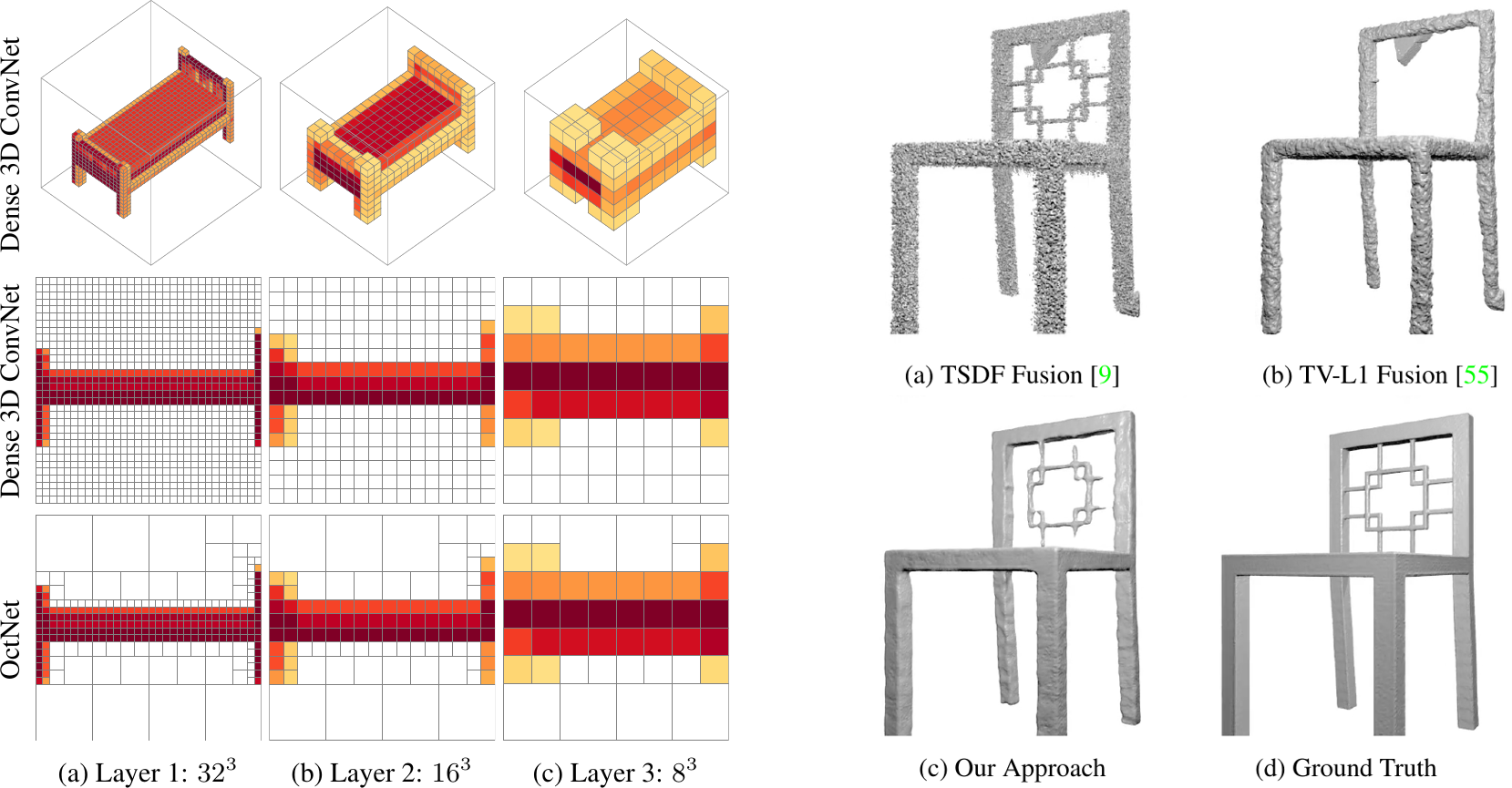

With OctNetFusion, we present a learning-based approach to depth fusion, i.e., dense 3D reconstruction from multiple depth images. We present a novel 3D CNN architecture that learns to predict an implicit surface representation from the input depth maps and can additionally infer the structure of the octrees representing the objects at inference time.
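As an illustration of what an implicit surface representation looks like on a voxel grid, the sketch below builds a truncated signed distance field for a sphere. It is not the OctNetFusion network or its output format; the function name `sphere_tsdf`, the sign convention (negative inside), and all parameter values are assumptions made for this example.

```python
import numpy as np


def sphere_tsdf(resolution, radius, truncation):
    """Truncated signed distance to a sphere centred in the unit cube.

    Values are negative inside the sphere, positive outside, and clipped
    to [-truncation, truncation]. The surface is the zero level set.
    """
    # Voxel centres along one axis, in [-0.5, 0.5].
    coords = (np.arange(resolution) + 0.5) / resolution - 0.5
    x, y, z = np.meshgrid(coords, coords, coords, indexing="ij")
    dist = np.sqrt(x ** 2 + y ** 2 + z ** 2) - radius
    return np.clip(dist, -truncation, truncation)


if __name__ == "__main__":
    tsdf = sphere_tsdf(resolution=32, radius=0.3, truncation=0.05)
    # Voxels whose value was not clipped lie in a narrow band around the surface.
    band = np.abs(tsdf) < 0.05
    print(f"voxels near the surface: {band.mean():.1%}")
```

Only the narrow band around the zero level set carries detailed information; everywhere else the field is constant. That is the kind of region an octree can store coarsely.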

We have published the code for both OctNet and OctNetFusion: